Real-time neuromorphics with Norse

Jens Egholm Pedersen

- <jeped@kth.se>

- @jensegholm

- https://neurocomputing.systems

| Time | Topic |
|---|---|
| 5' | Numerical acceleration of biological simulations |
| 10' | Neuromorphic engineering with Norse |
| 30' | Demonstration of real-time applications: event-based camera processing and closed-loop control systems |

Math: $\dot{v} = \frac{1}{\tau_{mem}}(v_{leak} - v + i)$

Python: delta_v = dt * tau_mem_inv * (v_leak - old_v + i)
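The Python line is one forward-Euler step of the equation, with `tau_mem_inv` standing for $1/\tau_{mem}$. Here is a minimal sketch in plain PyTorch. It assumes a constant input current and borrows `tau_mem_inv = 100` and `dt = 0.001` from the `LICell` printout further down. `li_membrane_step` is a hypothetical helper, not part of Norse:

```python
import torch

def li_membrane_step(v, i, tau_mem_inv=100.0, v_leak=0.0, dt=0.001):
    """Hypothetical helper: one forward-Euler step of dv/dt = (1 / tau_mem) * (v_leak - v + i)."""
    delta_v = dt * tau_mem_inv * (v_leak - v + i)
    return v + delta_v

v = torch.zeros(1)
i = torch.ones(1)  # constant input current (assumption for this sketch)
trace = []
for _ in range(1000):  # 1000 steps of 1 ms = 1 s of simulated time
    v = li_membrane_step(v, i)
    trace.append(v.item())

print(trace[0])   # ≈ 0.1 after the first step
print(trace[-1])  # close to the fixed point v = v_leak + i = 1
```

With $v_{leak} = 0$ and $i = 1$, the membrane relaxes towards $v = 1$, closing a tenth of the remaining gap at every step.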

import torch

import norse.torch as snn


snn.LICell()

LICell(p=LIParameters(tau_syn_inv=tensor(200.), tau_mem_inv=tensor(100.), v_leak=tensor(0.)), dt=0.001)

snn.LIFCell()

Norse is built on PyTorch, which works with tensors.

torch.tensor([0])

tensor([0])


torch.zeros(2, 2)

tensor([[0., 0.],
        [0., 0.]])

torch.ones(8)

tensor([1., 1., 1., 1., 1., 1., 1., 1.])

data = torch.ones(10)

Tensors can hold almost any kind of data: vectors, matrices (such as camera frames), and higher-dimensional data such as EEG recordings.

We can check the dimensions of a tensor with .shape
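For example, `.shape` returns a `torch.Size`, which compares equal to a plain tuple. The third tensor is random, made-up data standing in for a multichannel recording, with time as the first dimension:

```python
import torch

data = torch.ones(10)
print(data.shape)       # torch.Size([10])

matrix = torch.zeros(2, 2)
print(matrix.shape)     # torch.Size([2, 2])

# Made-up recording: 100 time steps, 1 sample, 8 channels
recording = torch.randn(100, 1, 8)
print(recording.shape)  # torch.Size([100, 1, 8])
```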

We can now feed our data (a tensor) into our model (the neuron dynamics).

data = torch.ones(1)

model = snn.LICell()

model(data)

(tensor([0.], grad_fn=<AddBackward0>), LIState(v=tensor([0.], grad_fn=<AddBackward0>), i=tensor([1.])))


output, state = model(data)

state

LIState(v=tensor([0.], grad_fn=<AddBackward0>), i=tensor([1.]))

output_values, output_state = snn.LICell()(data)

snn.LIFCell()(torch.ones(1))

(tensor([0.], grad_fn=<CppNode<SuperFunction>>), LIFFeedForwardState(v=tensor([0.], grad_fn=<AddBackward0>), i=tensor([1.])))

snn.LIFCell()(data)

model = snn.LIFCell()
data = torch.ones(1)
state = None
for t in range(100):
    output, state = model(data, state)
    print(output)

tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([1.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([0.], grad_fn=<CppNode<SuperFunction>>)
tensor([1.], grad_fn=<CppNode<SuperFunction>>)
...
tensor([1.], grad_fn=<CppNode<SuperFunction>>)

(100 lines in total: the neuron is silent for the first six steps, spikes at step 7, and then spikes every third step.)
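To see where this regular pattern comes from, here is a simplified sketch that re-implements one LIF step with forward-Euler updates in plain PyTorch. It is not Norse's code. The time constants and `dt` are taken from the `LICell` printout above. The threshold `V_TH = 1` and reset `V_RESET = 0` are assumed values. Under these assumptions a constant input of 1 produces the same pattern: silence while the synaptic current builds up, then a spike every third step.

```python
import torch

# Time constants and dt as printed for LICell; V_TH and V_RESET are assumptions
TAU_SYN_INV = 200.0
TAU_MEM_INV = 100.0
V_LEAK, V_TH, V_RESET = 0.0, 1.0, 0.0
DT = 0.001

def lif_step(x, v, i):
    """One forward-Euler LIF step; returns (spike, new_v, new_i)."""
    # Membrane integrates the synaptic current while leaking towards V_LEAK
    v_decayed = v + DT * TAU_MEM_INV * ((V_LEAK - v) + i)
    # Synaptic current decays exponentially
    i_decayed = i - DT * TAU_SYN_INV * i
    # Spike wherever the membrane is above the threshold
    z = (v_decayed > V_TH).float()
    # Reset the neurons that spiked, keep the others
    v_new = (1 - z) * v_decayed + z * V_RESET
    # Input arrives as a jump in the synaptic current
    i_new = i_decayed + x
    return z, v_new, i_new

x = torch.ones(1)
v, i = torch.zeros(1), torch.zeros(1)
spikes = []
for t in range(100):
    z, v, i = lif_step(x, v, i)
    spikes.append(int(z.item()))

print(spikes[:13])  # [0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 1]
print(sum(spikes))  # 32
```

The synaptic current settles at 5 (it loses a fifth per step and gains 1). From then on, it takes exactly three steps to push the reset membrane past the threshold.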


If the data is a time series, with time as the first dimension, we can simulate all time steps at once.

data = torch.ones(100, 1)

snn.LIF()(data)

(tensor([[0.],
        [0.],
        [0.],
        [0.],
        [0.],
        [0.],
        [1.],
        [0.],
        [0.],
        [1.],
        ...
        [1.]], grad_fn=<StackBackward0>),
 LIFFeedForwardState(v=tensor([0.], grad_fn=<AddBackward0>), i=tensor([5.0000])))

(100 rows in total: the first spike appears at step 7, then one every third step, the same pattern as in the step-by-step loop.)

model = snn.LIF()
output, state = model(data)
output

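Note the difference between LIFCell, which computes a single time step, and LIF, which takes a whole time series (time first) and loops over it.

This mirrors the PyTorch naming convention, as in torch.nn.RNNCell (one step) versus torch.nn.RNN (a whole sequence).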
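The cell-versus-sequence convention can be sketched in plain PyTorch. A cell function handles one time step and threads its state explicitly. A sequence wrapper loops over the first (time) dimension and stacks the outputs. Both `leaky_cell` and `unroll` are hypothetical names for illustration. This shows the shape of the idea, not how Norse implements `LIF`:

```python
import torch

def leaky_cell(x, state=None, decay=0.9):
    """Single time step of a leaky integrator; state is None on the first call."""
    if state is None:
        state = torch.zeros_like(x)
    state = decay * state + x
    return state, state  # (output, new state)

def unroll(cell, inputs, state=None):
    """Apply a single-step cell along the first (time) dimension."""
    outputs = []
    for x_t in inputs:  # iterating over a tensor walks its first dimension
        out, state = cell(x_t, state)
        outputs.append(out)
    return torch.stack(outputs), state

data = torch.ones(100, 1)  # (time, neurons), as in the LIF example
outputs, final_state = unroll(leaky_cell, data)
print(outputs.shape)      # torch.Size([100, 1])
print(final_state.shape)  # torch.Size([1])
```

The sequence version returns the same kind of `(output, state)` pair as the cell, so the final state can be passed on to process the next chunk of time.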

Our models have so far been running on the CPU, but it is simple to move them to the GPU.

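model = snn.SequentialState(
    snn.Lift(torch.nn.Conv2d(1, 3, 5, padding=2)),  # apply the convolution at every time step
    snn.LIF(),
    torch.nn.Flatten(1),
    torch.nn.Linear(30000, 10),  # 3 channels x 100 x 100 pixels = 30000
    torch.nn.Sigmoid()
)

data = torch.ones(100, 1, 1, 100, 100)  # (time, batch, channels, height, width)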


model = snn.SequentialState(
    snn.Lift(torch.nn.Conv2d(1, 3, 5, padding=2)),
    snn.LIF(),
    torch.nn.Flatten(1),
    torch.nn.Linear(30000, 10),
    torch.nn.Sigmoid()
).cuda()

data = torch.ones(100, 1, 1, 100, 100).cuda()

%timeit model(data)

15.4 ms ± 23.5 µs per loop (mean ± std. dev. of 7 runs, 1 loop each)


model = model.cpu()

data = data.cpu()

%timeit model(data)

36 ms ± 2.28 ms per loop (mean ± std. dev. of 7 runs, 10 loops each)

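Hard-coding `.cuda()` fails on machines without a GPU. Below is a minimal device-agnostic sketch. It also synchronizes before reading the clock: CUDA kernels run asynchronously, so a timer that does not wait for them may under-report. To stay self-contained, it uses a plain PyTorch stand-in for the network above, with a ReLU instead of the spiking layer and the time steps folded into the batch dimension. `timed` is a hypothetical helper:

```python
import time
import torch

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Stand-in network without a spiking layer; time steps are treated as the batch
model = torch.nn.Sequential(
    torch.nn.Conv2d(1, 3, 5, padding=2),
    torch.nn.ReLU(),
    torch.nn.Flatten(1),
    torch.nn.Linear(30000, 10),
    torch.nn.Sigmoid(),
).to(device)
data = torch.ones(100, 1, 100, 100, device=device)

def timed(fn, repeats=10):
    """Average wall-clock seconds per call of fn(), synchronizing CUDA if used."""
    fn()  # warm-up run (e.g. CUDA initialization)
    if device.type == "cuda":
        torch.cuda.synchronize()
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    if device.type == "cuda":
        torch.cuda.synchronize()  # wait for all queued kernels to finish
    return (time.perf_counter() - start) / repeats

with torch.no_grad():
    output = model(data)
    seconds = timed(lambda: model(data))

print(output.shape, output.device)
print(f"{seconds * 1000:.1f} ms per forward pass on {device}")
```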

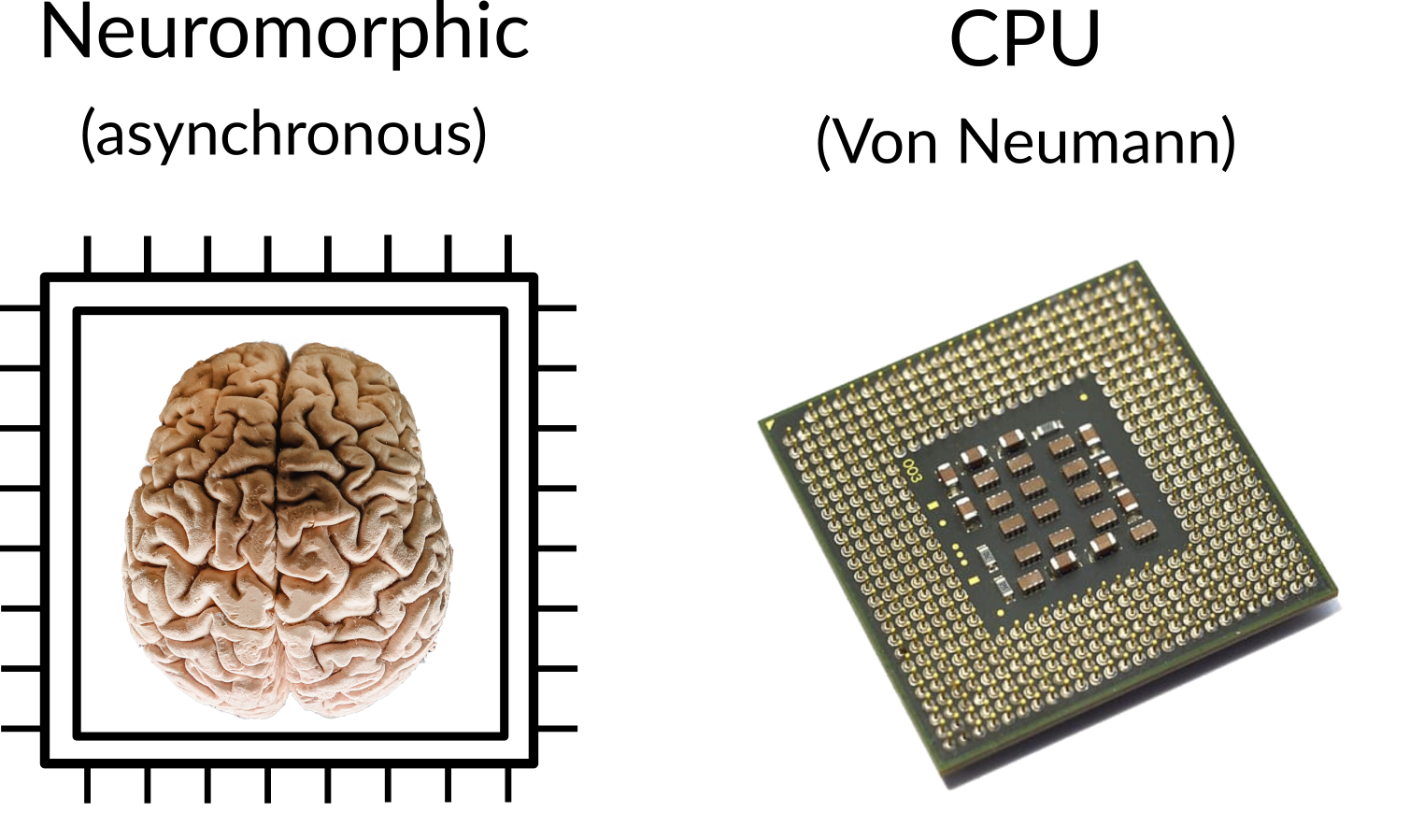

Neuromorphic sensing is fundamentally different because neuromorphic sensors produce sparse data.

Event-based cameras detect luminosity changes over time.

(Source: Wikipedia)

(Source: Wikipedia)

Sparse: event-based, so data is only produced when an event occurs, which means fast, low-cost processing

Frameless: high time resolution

Other benefits: high dynamic range, low energy consumption, etc.
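To make the sparsity concrete: an event camera reports a list of events, each with a pixel address and a polarity, rather than full frames. The sketch below accumulates a handful of made-up events into a dense frame with `index_put_`. The `(x, y, polarity)` layout and the signed accumulation are assumptions for illustration, not the format aestream uses internally:

```python
import torch

WIDTH, HEIGHT = 640, 480

# Made-up events: (x, y, polarity), polarity +1 (brighter) or -1 (darker)
events = torch.tensor([
    [10, 20, 1],
    [10, 20, 1],
    [11, 20, -1],
    [600, 400, 1],
])

frame = torch.zeros(WIDTH, HEIGHT)
x, y, polarity = events[:, 0], events[:, 1], events[:, 2].float()
# accumulate=True sums repeated events at the same pixel instead of overwriting
frame.index_put_((x, y), polarity, accumulate=True)

print(frame[10, 20].item(), frame[11, 20].item())  # 2.0 -1.0
print(f"{events.shape[0]} events touched {int((frame != 0).sum())} of {frame.numel()} pixels")
```

Only three of the 307200 pixels carry information, which is why processing the events directly, instead of full frames, can be so cheap.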

We need an efficient tool: event cameras emit millions of events per second.

Aestream - address-event streaming library: https://github.com/norse/aestream

import aestream

with aestream.DVSInput((640, 480)) as dvs:
    dvs.read()  # Gives us a tensor

By convolving our input signal with an edge detection kernel, we can single out oriented edges:

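import matplotlib.pyplot as plt

# 1D profile (positive in the middle, negative at the sides), repeated over 9 rows
kernel = (torch.linspace(-10, 10, 10).sigmoid().diff() - 0.14).repeat(9, 1)
plt.imshow(kernel)

# Two oriented kernels: the profile across columns and across rows
kernels = torch.stack([kernel, kernel.T])

# 1 input channel, 2 output channels, 9x9 kernels; no bias for a hand-made filter
convolution = torch.nn.Conv2d(1, 2, 9, bias=False)
# Conv2d weights have shape (out_channels, in_channels, 9, 9), hence unsqueeze(1)
convolution.weight = torch.nn.Parameter(kernels.unsqueeze(1))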
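A quick check of the two filters on a made-up image containing a single vertical line. The first kernel varies across columns, so it should respond strongly. The transposed kernel sums to a negative value along the line and stays at or below zero. The sketch calls `torch.nn.functional.conv2d` directly instead of going through a `Conv2d` module, with weights of shape `(2, 1, 9, 9)`:

```python
import torch
import torch.nn.functional as F

# Same construction as above: a 9-element profile, repeated over 9 rows
kernel = (torch.linspace(-10, 10, 10).sigmoid().diff() - 0.14).repeat(9, 1)
kernels = torch.stack([kernel, kernel.T]).unsqueeze(1)  # (out=2, in=1, 9, 9)

# Synthetic 32x32 image (batch=1, channel=1) with a vertical line in column 16
image = torch.zeros(1, 1, 32, 32)
image[0, 0, :, 16] = 1.0

response = F.conv2d(image, kernels)  # no padding, so the output is (1, 2, 24, 24)
vertical, horizontal = response[0, 0], response[0, 1]
print(response.shape)
print(vertical.max().item(), horizontal.max().item())
```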

How do we remove noise?

(Source: Wikipedia)

Can we use the refractory period?

noise_filter = snn.LIFRefracCell()

model = snn.SequentialState(
    noise_filter,
    convolution,
)

state = None

with aestream.DVSInput((640, 480)) as dvs:
    while True:
        # Read the data (assumed to be a (640, 480) tensor) and add the channel dimension Conv2d needs
        data = dvs.read().unsqueeze(0)

        # Run the model (with the previous state)
        output, state = model(data, state)

%%html

<div align="middle">

<video width="80%" controls autoplay loop>

<source src="2209-edge-detection.mp4" type="video/mp4">

</video></div>

Full code is available online: https://github.com/norse/aestream

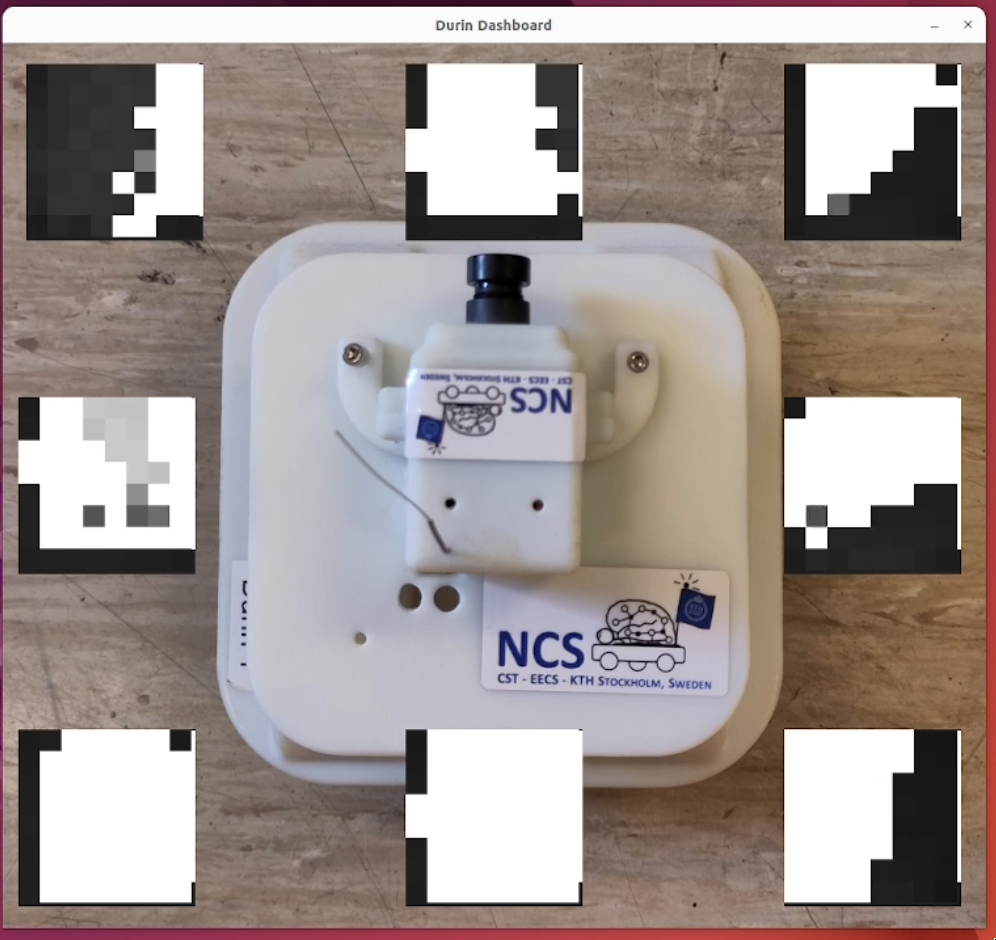

https://github.com/ncskth/durin

Durin has 8 Time-of-Flight sensors, each giving an 8x8 array of distance readings:

from durin import DurinUI

with DurinUI("durin5.local") as durin:
    (obs, dvs, cmd) = durin.read()
    obs.tof  # ToF sensor data
    left = obs.tof[1, 3, 3]
    right = obs.tof[6, 3, 3]
    data = torch.tensor([left, right])

p = snn.LIParameters(tau_mem_inv=100)

network = snn.LICell(p)


from durin import DurinUI

network_state = None  # No network state before the first step

with DurinUI("durin5.local") as durin:
    while True:  # Closed control loop
        # Extract ToF sensor data
        (obs, dvs, cmd) = durin.read()
        left = obs.tof[1, 3, 3]
        right = obs.tof[6, 3, 3]
        data = torch.tensor([left, right])

        # Update the network with the input and read the output
        output, network_state = network(data, network_state)
        left, right = output  # Separate left and right outputs

        # Wheel order: NE NW SW SE (the import of MoveWheels is not shown on the slide)
        command = MoveWheels(left, right, right, left)
        durin(command)  # Send the command to Durin
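Without the robot at hand, the sense-integrate-act loop can be sketched with a simulated sensor. The sketch smooths noisy left/right distance readings with the same forward-Euler leaky update as the LI membrane equation (with $v_{leak} = 0$). It then maps the result to four wheel speeds in the `(left, right, right, left)` order used above. The sensor model `read_fake_tof`, its noise level, and the plain list standing in for `MoveWheels` are all hypothetical. The sketch does not use the Durin API:

```python
import torch

DT, TAU_MEM_INV = 0.001, 100.0  # forward-Euler step and inverse membrane time constant

def read_fake_tof():
    """Hypothetical sensor: left/right distances (metres) plus measurement noise."""
    true_distance = torch.tensor([0.5, 1.5])
    return true_distance + 0.2 * torch.randn(2)

def leaky_integrate(v, x):
    """Forward-Euler step of dv/dt = tau_mem_inv * (x - v)."""
    return v + DT * TAU_MEM_INV * (x - v)

torch.manual_seed(0)
v = torch.zeros(2)
for step in range(500):
    data = read_fake_tof()          # sense
    v = leaky_integrate(v, data)    # integrate: smooths out the sensor noise
    left, right = v.tolist()
    command = [left, right, right, left]  # act: NE, NW, SW, SE (stand-in for MoveWheels)

print([round(c, 2) for c in command])
```

The leaky integration acts as a low-pass filter, so the wheel commands follow the true distances instead of jittering with every noisy reading.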

%%html

<div align="middle">

<video width="80%" controls autoplay loop>

<source src="2209-durin-braitenberg.mp4" type="video/mp4">

</video></div>

Full example available here: https://github.com/ncskth/durin/

Work is ongoing to run Norse models on

Thank you!